⚖️ LAGE-tool

Local AI Inference GPU Economics

Compare consumer GPUs, data center cards, and AI systems for running LLMs locally or in the cloud.

NVIDIA RTX

AMD ROCm

Intel Arc

Cloud

DGX Spark

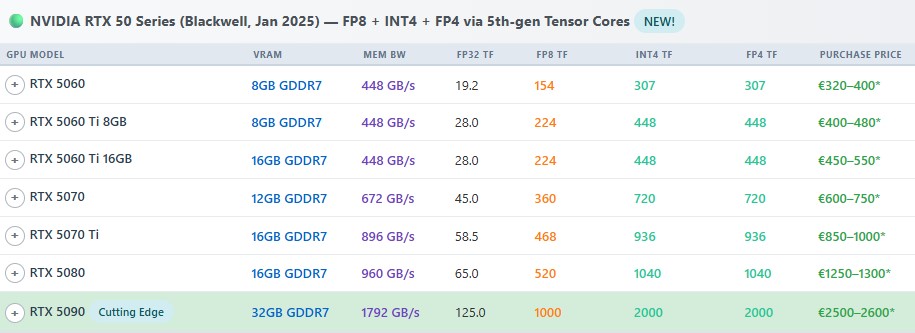

📊 Full Comparison Table

Search, filter, and sort 60+ GPUs. Compare VRAM, memory bandwidth, TFLOPS, prices, and LLM token speeds side by side. Add up to 6 GPUs to the compare view.

Filter by series

Sort any column

Side-by-side compare

Consumer + cloud + eBay

Open comparison table →

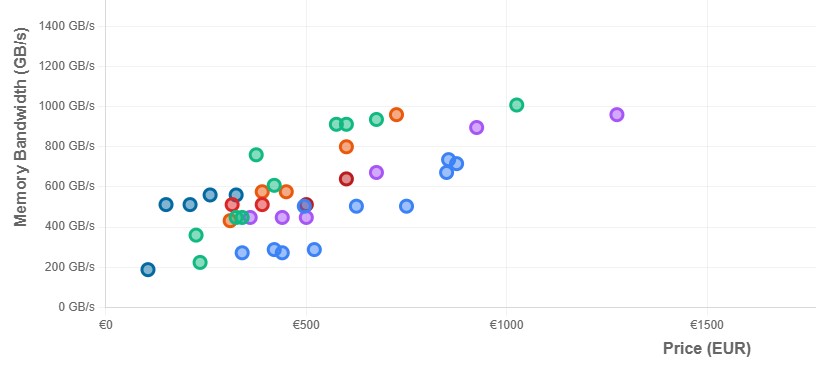

📈 Price vs Bandwidth & VRAM

Interactive scatter charts. See which GPUs offer the best value, meaning the most memory bandwidth or VRAM for the price. Click the legend to filter by vendor.

Price vs bandwidth

Price vs VRAM

Click to filter

Hover for details

Open charts →
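
The "best value" idea behind these charts can be sketched as a simple ratio: bandwidth or VRAM divided by price. The snippet below is a minimal illustration under stated assumptions, not the tool's actual method. The `Gpu` class, the `value_metrics` and `rank_by` helpers, and the three cards with their numbers are all hypothetical placeholders, not real GPU specifications or prices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gpu:
    """Hypothetical record; field names are assumptions for this sketch."""
    name: str
    price_usd: float
    bandwidth_gb_s: float
    vram_gb: float


def value_metrics(gpu: Gpu) -> dict:
    """Return bandwidth and VRAM per US dollar for one card."""
    if gpu.price_usd <= 0:
        raise ValueError("price must be positive")
    return {
        "bandwidth_per_usd": gpu.bandwidth_gb_s / gpu.price_usd,
        "vram_per_usd": gpu.vram_gb / gpu.price_usd,
    }


def rank_by(gpus: list, metric: str) -> list:
    """Sort cards from best to worst value on the chosen metric."""
    return sorted(gpus, key=lambda g: value_metrics(g)[metric], reverse=True)


# Made-up example cards: the numbers are placeholders, not real specs.
CARDS = [
    Gpu("Card A", price_usd=500.0, bandwidth_gb_s=500.0, vram_gb=16.0),
    Gpu("Card B", price_usd=1000.0, bandwidth_gb_s=800.0, vram_gb=48.0),
    Gpu("Card C", price_usd=2000.0, bandwidth_gb_s=1800.0, vram_gb=24.0),
]

if __name__ == "__main__":
    for metric in ("bandwidth_per_usd", "vram_per_usd"):
        ranking = [g.name for g in rank_by(CARDS, metric)]
        print(metric, ranking)
```

A card can rank well on one metric and poorly on the other, which is why the two charts are shown separately.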